Libbybot - a presence robot with Chromium 51, Raspberry Pi and RTCMultiConnection for WebRTC

Edit, July 2017: I've put detailed instructions and code on GitHub. If you really want to try this, follow those instead.

I've been working on a cheap presence robot for a while, gradually and with help from buddies at the hackspace and at work. I now use it quite often to attend meetings, and I'm working with Richard on ways to convey interest, boredom and other emotions at a distance using physical motion (as well as greetings and 'there's someone here').

I've noticed a few hits on my earlier instructions and code, so I thought it was worth updating my notes a bit.

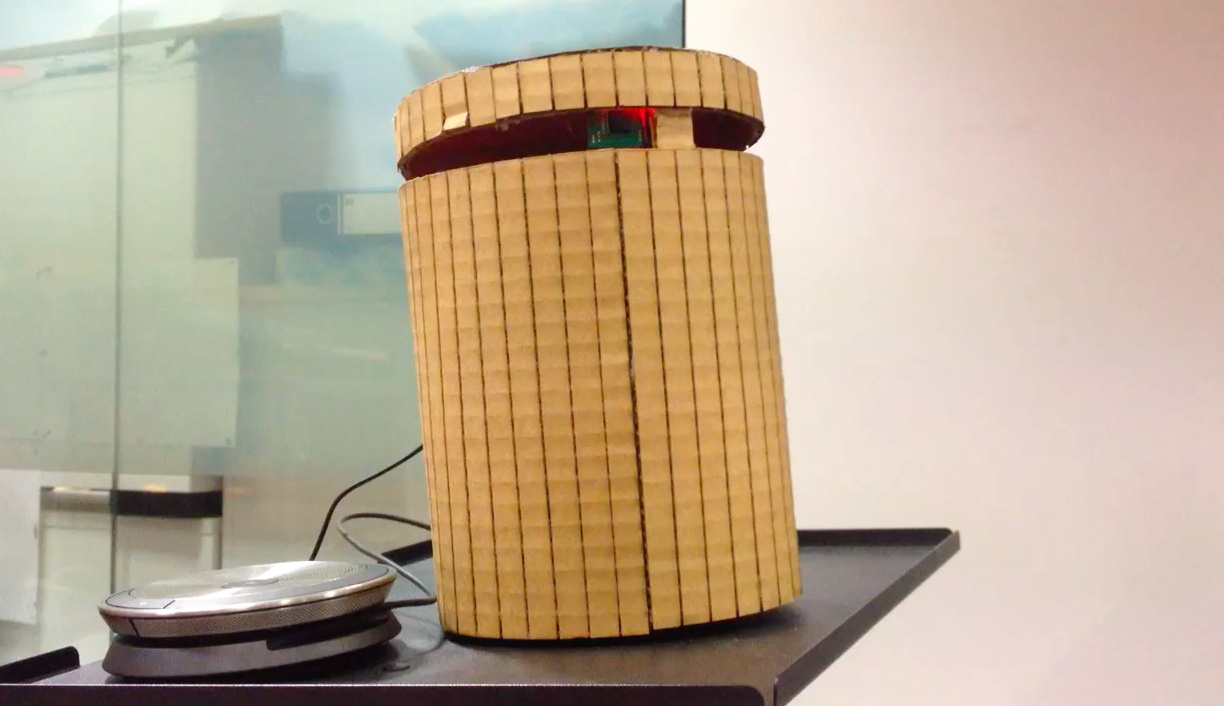

The main hardware change is that I've dispensed with the screen, which at its small size wasn't very visible anyway. This has opened up a rich seam of interesting research around physical movement: the robot needs some way to show that someone is there, and it's nice to be able to wave when someone comes near. It's also very handy to be able to turn left and right to talk to different people. It has gone through a "bin"-like iteration, where the camera popped out of the top; a two-stick version inspired by "The Stem" (one stick for the camera, one to gesture); and is now a much-improved IKEA ESPRESSIVO lamp hack with the camera and gesture "stick" combined again. People like this latest version much more than the bin or the sticks, though I haven't yet tried it in situ. Annoyingly, the lamp has been discontinued, which is a pity because it fits a Pi 3 (albeit with a right-angled power supply cable) and some servos (driven directly from the Pi with ServoBlaster) rather nicely.
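For anyone trying the servo side, here is a minimal Python sketch of a "wave" gesture driven through ServoBlaster. It assumes the servod daemon is running and exposes the `/dev/servoblaster` device, which accepts lines of the form `servo=position`, with positions in its default 10 µs steps (so 150 is a 1.5 ms pulse). The servo number, the positions and the `wave()` helper are hypothetical and will need tuning for your own wiring and linkage; this is an illustration, not the code the robot actually runs.

```python
import time
from typing import IO, List

# Hypothetical wiring: ServoBlaster servo number 0 drives the gesture arm.
# Change this to match your own servod configuration.
WAVE_SERVO = 0

# Positions in ServoBlaster's default units of 10 microseconds,
# so 150 means a 1.5 ms pulse (roughly centre for many hobby servos).
# These values are illustrative assumptions, not measured ones.
CENTRE = 150
WAVE_LOW = 120
WAVE_HIGH = 180


def servo_command(servo: int, position: int) -> str:
    """Format one ServoBlaster command line, e.g. '0=150\\n'."""
    return f"{servo}={position}\n"


def wave(dev: IO[str], times: int = 2, pause: float = 0.3) -> List[str]:
    """Swing the gesture servo back and forth, then return it to centre.

    Returns the list of commands written, which makes it easy to check
    the sequence without real hardware.
    """
    commands: List[str] = []
    sequence = [WAVE_LOW, WAVE_HIGH] * times + [CENTRE]
    for position in sequence:
        cmd = servo_command(WAVE_SERVO, position)
        dev.write(cmd)
        dev.flush()  # push each command out promptly rather than buffering
        commands.append(cmd)
        time.sleep(pause)  # give the servo time to travel
    return commands
```

On the Pi, open `/dev/servoblaster` for writing and pass the file object to `wave()`; passing an `io.StringIO` instead lets you check the command sequence without any hardware attached.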

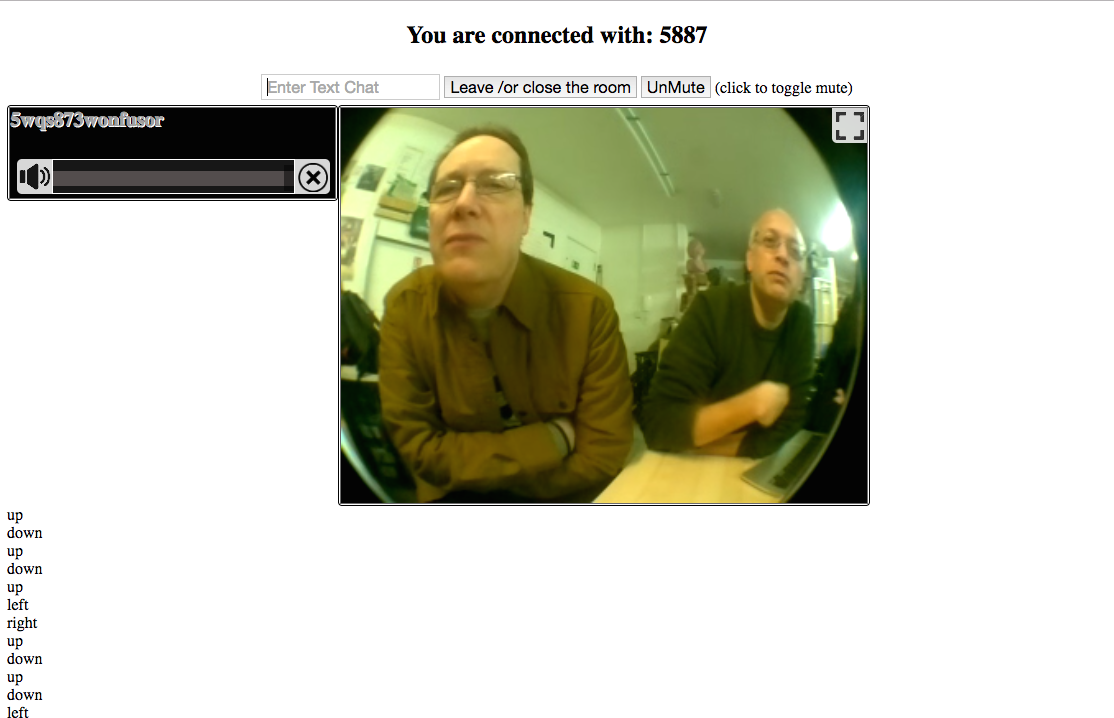

The main software change is that I've moved from EasyRTC to RTCMultiConnection, because for some reason I couldn't get EasyRTC to work with data plus audio alone (rather than data, audio and video). I like RTCMultiConnection a lot: it's both simple and flexible (I get the impression EasyRTC was based on older code, while RTCMultiConnection has been written from scratch). The RTCMultiConnection examples and code snippets were also easier to adapt for my own, slightly obscure, purposes.

I've also moved from a Jabra speaker/mic to a Sennheiser one. The Jabra, while excellent for improving the sound on laptops in Skype and similar meetings, was unreliable on the Pi: it dropped to low volume, and its mic periodically stopped working with Chromium (even when connected through a powered USB hub). The Sennheiser is (even) pricier but much more reliable.

Hope that helps if anyone else is trying this stuff. I'll post the code sometime soon; most of this guide still holds.